Let me steal the quote with which the first chapter starts.

History is not the past but a map of the past, drawn from a particular point of view, to be useful to the modern traveler.

Henry Glassie, Historian

Engineers nowadays have to identify and mitigate issues in their applications before there is a meltdown, meaning we cannot just wait for the system to fail and then do a postmortem. This is why it is not enough to have the data stored somewhere; we also need to know when something is about to happen.

The data needs to be correlated and organized, ready to be analyzed by computers. OpenTelemetry does this: it turns individual logs, metrics, and traces into an understandable graph of information.

The book focuses on the observability of distributed systems, which it defines as:

A system whose components are located on different networked computers that communicate and coordinate their actions by passing messages to one another.

This means that not only is your microservice app a distributed system, but so is your monolith that talks to an API to get the weather or something.

At a high level (of course), a distributed system has two components:

- Resources: physical (servers, RAM, CPUs, NICs) and logical (API endpoints, databases). Basically everything that makes up the system.
- Transactions: requests that orchestrate the resources to achieve something, usually on behalf of the user (booking a flight, loading a website).

So, how do we observe a distributed system? Well, it needs to emit telemetry. Telemetry is data that describes what your system is doing or what is happening inside it. Without this the system is a black box.

There are two types of telemetry:

- User telemetry: how the user interacts with the system. How long did they hover over the "buy now" button?
- Performance telemetry: statistics about how the system components are behaving. How long did it take for the button to load?

Within telemetry there are different types of signals. tbh I did not fully get this part, but I think a signal is one particular kind of telemetry: an application's logs are one signal, metrics like the system's CPU usage are another, and so on.

Each signal has two parts (feeling overloaded with terms already?):

- Instrumentation: the code that emits the data.
- Transmission: the system responsible for sending the data over the network to an analysis tool.

It is important to mention that the system that emits the data and the system that analyzes the data are separate from each other.
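The toy sketch below makes that split concrete. It is plain Python, not the OpenTelemetry API. `emit_event` plays the role of instrumentation and `BatchSender` plays the role of the transmission system. Both names are hypothetical. The "network" is just a callable that stands in for a separate analysis backend.

```python
import time
from typing import Callable

# Hypothetical transmission piece: buffers telemetry and ships it in batches.
class BatchSender:
    def __init__(self, send: Callable[[list], None], batch_size: int = 3):
        self.send = send              # stands in for "the network"
        self.batch_size = batch_size
        self.buffer: list = []

    def enqueue(self, record: dict) -> None:
        self.buffer.append(record)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        if self.buffer:
            self.send(self.buffer)
            self.buffer = []

# Hypothetical instrumentation piece: code inside the app that only emits data.
def emit_event(sender: BatchSender, name: str, **fields) -> None:
    sender.enqueue({"name": name, "time": time.time(), **fields})

# The "analysis tool" is a separate system; here it just collects batches.
received: list = []
sender = BatchSender(send=received.append)
for i in range(4):
    emit_event(sender, "page_loaded", page=f"/item/{i}")
sender.flush()  # ship whatever is left over
print([len(batch) for batch in received])  # [3, 1]
```

The app code only emits. The transmission side decides when and how the data leaves the process, and the analysis side is free to live somewhere else entirely.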

Telemetry is the data itself. Analysis is what you do with the data.

Telemetry + Analysis = Observability

It is called telemetry because the first remote diagnostic systems transmitted data over telegraph lines. Telemetry was first developed to monitor power plants and public power grids (which are, in fact, distributed systems).

The first form of telemetry was logging. Logs are text-based messages that tell you about something that happened in the system. Over time, developers improved how these logs are stored and searched.

At the end of the day, logs are just recorded events. One might say that a file could not be created because the system ran out of disk space. That is useful, but wouldn't it be better to also track the storage itself? That is what metrics are for.

Metrics are a compact representation of system state: memory, CPU usage, number of logged-in users, and so on.

Then came a third form of telemetry: distributed tracing. Instead of just tracking individual events (as logs do), it tracks whole operations: when each one starts, when it ends, and on which computer it happened. Its usefulness was limited by the amount of resources this type of system needs.

So the three primary forms of telemetry are logs, metrics, and traces.

Usually, the solutions for each are developed as isolated systems: you have one system for log collection, transmission, and analysis, and a different one for metric collection, transmission, and analysis. These systems do not talk to each other. This is not ideal.

Why is this not ideal? Since they do not know about each other, it becomes hard to find correlations between them.

Systems do not have issues that are scoped to just logs or just metrics. Since systems are composed of transactions and resources, they can only have transaction and resource issues, and those issues do not limit themselves to whatever you happen to be tracking with logs or with metrics. You need the context.

Telemetry comes in handy here: you can use metrics, logs, and traces together to understand your system. For example, logs and traces can help you reconstruct a transaction, while metrics tell you what was happening at a specific moment in time. Useful observations do not come from isolated data points; we need the context and correlation between these forms of telemetry.

The way we find what is wrong with a system is by seeing how these forms interact with each other.

So if your three systems are built as separate vertical silos, you need to keep switching between these "tabs" to try to correlate them, which is not an easy way to find the issue.

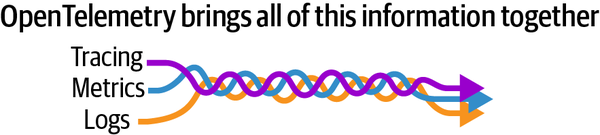

OpenTelemetry solves this issue: in it, the forms of telemetry are not standalone products; they become a "single braid of data".
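Here is a toy illustration of why a shared identifier matters. It is plain Python, not OpenTelemetry's data model. If spans and logs both carry the same trace ID, one query can pull the whole story of a request instead of hopping between tabs. The `Record` type and the `one_request` helper are made up for this sketch. Metrics are left out because they are aggregates rather than per-request records.

```python
from dataclasses import dataclass, field

@dataclass
class Record:
    signal: str          # "span" or "log" in this toy
    trace_id: str        # the thread that ties signals together
    body: str
    attributes: dict = field(default_factory=dict)

# Pretend these came from two different pipelines.
spans = [
    Record("span", "abc123", "POST /checkout", {"duration_ms": 870}),
    Record("span", "def456", "GET /home", {"duration_ms": 40}),
]
logs = [
    Record("log", "abc123", "payment provider timed out, retrying"),
    Record("log", "def456", "user logged in"),
]

def one_request(trace_id: str, *sources: list) -> list:
    """Pull every record for one request out of all sources at once."""
    return [r for source in sources for r in source if r.trace_id == trace_id]

story = one_request("abc123", spans, logs)
for r in story:
    print(r.signal, r.body)
```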

Instrumentation is the process of adding observability code to a service. There are two types:

- Manual: you literally modify the code to generate signals.
- Automatic: you use an agent to collect the signals without any need to touch the code.
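Here is a rough analogy for the two approaches in plain Python. In `checkout`, the timing code is written by hand, which is the manual approach. `auto_instrument` wraps a function from the outside without editing its source. That is loosely the idea behind agent-based instrumentation. Everything here is hypothetical, including the stand-in `payments` module. Real agents instrument whole libraries and emit proper spans, not a list of timings.

```python
import time
import types

timings: list = []

# Manual instrumentation: we edit our own function to record how long it takes.
def checkout(cart: list) -> float:
    start = time.perf_counter()
    total = sum(cart)
    timings.append(("checkout", time.perf_counter() - start))  # added by hand
    return total

# Stand-in for a third-party library whose source we do not touch.
payments = types.ModuleType("payments")
def _charge(amount: float) -> str:
    return f"charged {amount:.2f}"
payments.charge = _charge

# "Automatic" instrumentation, loosely: wrap the function from the outside.
def auto_instrument(module: types.ModuleType, name: str) -> None:
    original = getattr(module, name)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return original(*args, **kwargs)
        finally:
            timings.append((f"{module.__name__}.{name}", time.perf_counter() - start))
    setattr(module, name, wrapper)

auto_instrument(payments, "charge")
checkout([9.99, 5.01])
payments.charge(15.0)
print([name for name, _ in timings])  # ['checkout', 'payments.charge']
```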

OpenTelemetry concerns itself with three signals (which are the telemetry forms we were discussing previously): traces, metrics, and logs.

A trace is a way to think about units of work in a distributed system. This means a trace represents a full process in your system, from beginning to end.

The book suggests thinking about a trace as a set of log statements that follow a well-defined schema. Each trace is a collection of related logs, called spans.
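Below is a minimal sketch of that mental model in plain Python. The `Span` class is hypothetical and is not the OpenTelemetry SDK's span. The ID sizes match W3C Trace Context (a 16-byte trace ID and an 8-byte span ID), but everything else is simplified. Each span records a start, an end, and where it ran. The shared `trace_id` and the `parent_id` links are what turn loose spans into one trace.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Span:
    name: str
    trace_id: str               # shared by every span in the trace
    span_id: str                # unique per span
    parent_id: Optional[str]    # None for the root span
    start: float = field(default_factory=time.time)
    end: Optional[float] = None
    attributes: dict = field(default_factory=dict)

    def finish(self) -> None:
        self.end = time.time()

def new_id(n_bytes: int) -> str:
    return secrets.token_hex(n_bytes)  # lowercase hex, 2 chars per byte

# One request: a root span with two child spans on other machines.
trace_id = new_id(16)
root = Span("GET /flights", trace_id, new_id(8), None, attributes={"host": "web-1"})
search = Span("search flights", trace_id, new_id(8), root.span_id, attributes={"host": "search-2"})
search.finish()
price = Span("fetch prices", trace_id, new_id(8), root.span_id, attributes={"host": "pricing-1"})
price.finish()
root.finish()

trace = [root, search, price]
for span in trace:
    indent = "" if span.parent_id is None else "  "
    print(f"{indent}{span.name} [{span.attributes['host']}] {(span.end - span.start) * 1000:.2f} ms")
```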

Metrics record the system state. This refers to information like memory, CPU usage, the number of users logged in to the system, stuff like that.

They are cheap and accurate; the issue is that it is hard to correlate them with what is happening in specific end-user transactions.

OpenTelemetry metrics have semantic meaning, for example, a metric that records the payload size of requests.

OpenTelemetry also allows metrics to be linked with other signals, so they can have more context.
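This toy histogram shows why metrics are cheap but lose per-request detail. It is a hypothetical class written in plain Python, not the OpenTelemetry metrics API. It records request payload sizes under the semantic-convention name `http.server.request.body.size`, with unit `By` (bytes). Individual requests collapse into bucket counts, one series per attribute set. That collapse is exactly what makes metrics compact.

```python
from collections import defaultdict

class Histogram:
    """Toy histogram: counts values into fixed buckets, one series per attribute set."""
    def __init__(self, name: str, unit: str, bounds: list):
        self.name, self.unit, self.bounds = name, unit, bounds
        # key: frozen attribute set -> bucket counts (last bucket catches overflow)
        self.series = defaultdict(lambda: [0] * (len(bounds) + 1))

    def record(self, value: float, attributes: dict) -> None:
        counts = self.series[frozenset(attributes.items())]
        for i, bound in enumerate(self.bounds):
            if value <= bound:
                counts[i] += 1
                return
        counts[-1] += 1

payload = Histogram("http.server.request.body.size", "By", bounds=[1_000, 10_000, 100_000])
payload.record(512, {"http.route": "/upload"})
payload.record(42_000, {"http.route": "/upload"})
payload.record(2_000_000, {"http.route": "/upload"})
payload.record(300, {"http.route": "/login"})

for attrs, counts in payload.series.items():
    print(dict(attrs), counts)
```

Four requests become two small arrays of counts. Cheap to store, but which request sent the 2 MB payload is no longer recoverable from the metric alone.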

Logs are the most basic way for a computer to tell you what it is doing. OpenTelemetry tries to adapt to whatever logging you are already using.

What OpenTelemetry adds to logs is context from traces and metrics, so logs do not happen in a vacuum but tell a story.
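Here is a sketch of "logs with context" that uses only Python's standard `logging` module. A filter stamps each record with the current trace ID, so a log line can later be joined with the trace it belongs to. `current_trace_id` and `TraceContextFilter` are hypothetical stand-ins. OpenTelemetry's own logging integration does this differently and attaches more fields.

```python
import contextvars
import io
import logging

# Hypothetical "current trace" holder for this sketch only.
current_trace_id = contextvars.ContextVar("current_trace_id", default="-")

class TraceContextFilter(logging.Filter):
    """Stamp every log record with the trace ID of the request it belongs to."""
    def filter(self, record: logging.LogRecord) -> bool:
        record.trace_id = current_trace_id.get()
        return True

stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.addFilter(TraceContextFilter())
handler.setFormatter(logging.Formatter("%(levelname)s trace_id=%(trace_id)s %(message)s"))

log = logging.getLogger("checkout")
log.addHandler(handler)
log.setLevel(logging.INFO)
log.propagate = False  # keep the demo output in our own stream only

log.info("no request in flight")
token = current_trace_id.set("4bf92f3577b34da6a3ce929d0e0e4736")
log.warning("card declined")  # this line can now be tied to its trace
current_trace_id.reset(token)

print(stream.getvalue())
```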

A signal gives you some information about something that happened, but it would be useless without context.

Context is what makes the signals relevant. There are three types of context in OpenTelemetry:

- Time
- Attributes
- The context object itself

There is an OpenTelemetry context specification that dictates how context is sent between two parts of the system.

Any service with OpenTelemetry tracing enabled will create and use this data to pass the context on to the next service, process, or whatever follows in the pipeline.

Propagators are how this data is actually sent between components. When a request starts, OpenTelemetry assigns it an ID that travels with the request through the whole process, which is how the context is kept.
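By default, OpenTelemetry propagates trace context using the W3C Trace Context `traceparent` header. Below is a stripped-down sketch of what injecting and extracting that header looks like, written by hand in Python for illustration. `inject` and `extract` are my own helpers, not the OpenTelemetry propagator API. They only handle version `00` and ignore the companion `tracestate` header.

```python
import re
import secrets

# traceparent = version "-" trace-id "-" parent-id "-" trace-flags (lowercase hex)
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

def inject(headers: dict, trace_id: str, span_id: str, sampled: bool = True) -> None:
    """Caller side: write the current trace context into outgoing headers."""
    headers["traceparent"] = f"00-{trace_id}-{span_id}-{'01' if sampled else '00'}"

def extract(headers: dict):
    """Callee side: return (trace_id, parent_span_id, sampled), or None if absent/invalid."""
    match = TRACEPARENT.match(headers.get("traceparent", ""))
    if match is None:
        return None
    trace_id, parent_id, flags = match.groups()
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        return None  # all-zero IDs are not allowed
    return trace_id, parent_id, bool(int(flags, 16) & 0x01)  # lowest bit = sampled

# Service A starts a request and calls service B.
trace_id, span_id = secrets.token_hex(16), secrets.token_hex(8)
outgoing_headers: dict = {}
inject(outgoing_headers, trace_id, span_id)

# Service B picks up the same trace instead of starting a new one.
incoming = extract(outgoing_headers)
print(outgoing_headers["traceparent"])
```

In this sketch, service B creates its own span with a new span ID, keeps `trace_id`, and records service A's span as its parent.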

Every piece of telemetry in OpenTelemetry has attributes. They are simply key-value pairs that describe something about that piece of telemetry.

The keys must be unique, and by default the maximum number of attributes is 128 due to resource constraints. (See Cardinality Explosion for more info.)
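Here is a toy version of those two rules in Python, using a hypothetical class rather than an SDK type. Keys are unique because setting an existing key overwrites it. Once the cap is reached, new keys are dropped and counted instead of stored, which is roughly how the spec describes limits. The value 128 mirrors the default limit mentioned above; in the real SDK it is configurable.

```python
class BoundedAttributes:
    """Key-value attributes with unique keys and a cap on how many are kept."""
    def __init__(self, max_count: int = 128):
        self.max_count = max_count
        self._attrs: dict = {}
        self.dropped = 0

    def set(self, key: str, value) -> None:
        if key in self._attrs or len(self._attrs) < self.max_count:
            self._attrs[key] = value   # same key: last write wins
        else:
            self.dropped += 1          # over the limit: drop it, but count it

    def get(self, key, default=None):
        return self._attrs.get(key, default)

    def __len__(self) -> int:
        return len(self._attrs)

attrs = BoundedAttributes()
attrs.set("http.request.method", "GET")
attrs.set("http.request.method", "POST")   # overwrites, still one key
for i in range(200):
    attrs.set(f"custom.{i}", i)

print(len(attrs), attrs.dropped)  # 128 73
```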

Resources are like attributes but they are immutable, once created they cannot be modified.
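In OpenTelemetry, a resource describes the entity producing the telemetry, such as the service or the host. In plain Python, a read-only mapping is a quick way to get the "cannot be modified" behavior. `make_resource` is a hypothetical helper, not the SDK's `Resource` class. The keys used (`service.name`, `service.version`, `host.name`) are real semantic-convention keys.

```python
from types import MappingProxyType

def make_resource(attributes: dict) -> MappingProxyType:
    # Copy first so later changes to the caller's dict cannot leak in,
    # then expose it through a read-only view.
    return MappingProxyType(dict(attributes))

source = {
    "service.name": "checkout",
    "service.version": "1.4.2",
    "host.name": "web-1",
}
resource = make_resource(source)
source["host.name"] = "web-2"  # does not affect the resource

try:
    resource["service.name"] = "something-else"
except TypeError:
    print("resources are read-only")
```

Every span, metric, and log emitted by this process would then point at the same resource, instead of repeating those keys on each piece of telemetry.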

I think there is a standard set of keys and values (attributes) for OpenTelemetry, the semantic conventions, so things do not get super messy across different cloud providers, runtimes, and so on.

OpenTelemetry has its own protocol, the OpenTelemetry Protocol (OTLP), which offers a single wire format. That is fancy speak for how the data is encoded and sent across the network.
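OTLP is usually protobuf sent over gRPC (default port 4317) or HTTP (default port 4318, e.g. `POST /v1/traces`). There is also a JSON encoding, which is easier to eyeball. The sketch below only builds a simplified, OTLP/JSON-shaped trace payload with the standard library and does not send anything. The nesting (`resourceSpans` → `scopeSpans` → `spans`) and the hex-encoded IDs follow my reading of the OTLP spec. `to_otlp_json` and the scope name are hypothetical, and real exporters include more fields, so check the spec before relying on the exact shape.

```python
import json
import time

def to_otlp_json(service_name: str, spans: list) -> str:
    """Shape a few spans roughly like an OTLP/JSON trace export request."""
    return json.dumps({
        "resourceSpans": [{
            "resource": {"attributes": [
                {"key": "service.name", "value": {"stringValue": service_name}},
            ]},
            "scopeSpans": [{
                "scope": {"name": "my.hypothetical.instrumentation"},
                "spans": [{
                    "traceId": s["trace_id"],                    # 32 hex chars
                    "spanId": s["span_id"],                      # 16 hex chars
                    "name": s["name"],
                    "startTimeUnixNano": str(s["start_ns"]),     # 64-bit ints as strings
                    "endTimeUnixNano": str(s["end_ns"]),
                } for s in spans],
            }],
        }],
    }, indent=2)

now = time.time_ns()
body = to_otlp_json("checkout", [{
    "trace_id": "4bf92f3577b34da6a3ce929d0e0e4736",
    "span_id": "00f067aa0ba902b7",
    "name": "POST /checkout",
    "start_ns": now,
    "end_ns": now + 870_000_000,   # 870 ms later
}])
print(body)
```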